Rails Ruby Bench Speed Roundup, 2.0 Through 2.6

Back in 2017, I gave a RubyKaigi talk tracing Ruby’s performance on Rails Ruby Bench up to that point. I’m still pretty proud of that talk!

But I haven’t kept the information up to date, and there was never a simple go-to blog post with the same information. So let’s give the (for now) current roundup - how well do all the various Rubies do at big concurrent Rails performance? How far has performance come in the last few years?

Plus, this now exists where I can link to it 😀

How I Measure

My primary M.O. has been pretty similar for a couple of years. I run Rails Ruby Bench, a big concurrent Rails benchmark based on Discourse, commonly-deployed open-source forum software that uses Rails. I run 10 processes and 60 threads on an Amazon EC2 m4.2xlarge dedicated instance, then see how fast I can run a lot of pseudorandomly generated HTTP requests through it. This is basically the same as most results you’ve seen on this blog. It’s also what you’ll see in the RubyKaigi talk above if you watch it.

For this post, I’m going to give everything in throughputs - that is, how many requests/second the test gives overall. I’m giving them in two graphs - measured against Discourse 1.5 for older Ruby, and measured against Discourse 1.8 for newer Ruby. One of the problems with macrobenchmarks is that there are basically always compatibility issues - old Discourse won’t work with newer Ruby, 1.8 works with most Rubies but is starting to show its age, and beyond 2.6 it’s really time for me to start measuring against even newer Discourse. That’s why you’re getting this post: after that switch it will be hard to compare Rubies side-by-side, and it’s useful to have an “up to now” record. Plus I have a while until Ruby 2.7, so this gives me extra time to get it all working 😊

The new data here - everything based on Discourse 1.8 - is based on 30 batches per Ruby version, with 30,000 HTTP requests per batch. For the Ruby versions I ran, the whole thing takes in the neighborhood of 12 hours. The older Discourse 1.5 data is much coarser, with 20 batches of 3,000 HTTP requests per Ruby version. My standards have come up a fair bit in the last two years.
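To make "throughput per batch" concrete, here's a minimal Ruby sketch of how a mean throughput and its variance could be computed from batch timings. The durations below are made-up placeholders, not measurements, and the helper names are hypothetical rather than anything from the Rails Ruby Bench code.

```ruby
# Requests per batch for the Discourse 1.8 runs described above.
REQUESTS_PER_BATCH = 30_000

# Throughput (reqs/sec) for each batch, given wall-clock seconds per batch.
def batch_throughputs(durations_sec, requests_per_batch)
  durations_sec.map { |secs| requests_per_batch / secs.to_f }
end

def mean(values)
  values.sum / values.size.to_f
end

# Sample variance (divides by n - 1).
def sample_variance(values)
  m = mean(values)
  values.sum { |v| (v - m)**2 } / (values.size - 1).to_f
end

# Hypothetical durations in seconds, for illustration only.
example_durations = [150.0, 160.0, 155.0]
throughputs = batch_throughputs(example_durations, REQUESTS_PER_BATCH)

puts format("mean throughput: %.1f reqs/sec", mean(throughputs))
puts format("sample variance: %.2f", sample_variance(throughputs))
```

With real data you'd feed in one duration per batch, so 30 values per Ruby version for the newer runs.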

Older Discourse, Older Ruby

First off, what did we see when measuring with the older Discourse version? This was in the RubyKaigi talk, so let’s look at that data. Here’s a graph showing the measured throughputs.

That’s a decent increase between 2.0.0 and 2.3.4.

And here’s a table with the data.

| Ruby Version | Throughput (reqs/sec) | Speed vs 2.0.0 |
|---|---|---|
| 2.0.0 | 127.6 | 100% |
| 2.1.10 | 168.3 | 132% |
| 2.2.7 | 187.7 | 147% |
| 2.3.4 | 190.3 | 149% |

So that’s about a 49% speed increase from Ruby 2.0.0 to 2.3.4 — keeping in mind that you can’t perfectly capture “Ruby version X is Y% faster than version Z.” It’s always a somewhat complicated approximation, for a specific use case.
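If you'd like to check the "Speed vs 2.0.0" column yourself, it's just each throughput divided by the 2.0.0 throughput. Here's a small Ruby sketch using the numbers from the table; the constant and method names are mine, chosen for illustration.

```ruby
# Throughputs (reqs/sec) measured against Discourse 1.5, from the table above.
DISCOURSE_15_THROUGHPUT = {
  "2.0.0"  => 127.6,
  "2.1.10" => 168.3,
  "2.2.7"  => 187.7,
  "2.3.4"  => 190.3
}.freeze

# Speed of each version as a whole-number percentage of the baseline version.
def speed_vs_baseline(throughputs, baseline_version)
  baseline = throughputs.fetch(baseline_version)
  throughputs.transform_values { |t| (100.0 * t / baseline).round }
end

speed_vs_baseline(DISCOURSE_15_THROUGHPUT, "2.0.0").each do |version, pct|
  puts "#{version}: #{pct}%"
end
```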

Newer Numbers

Those numbers were measured with Discourse 1.5, which worked from about Ruby 2.0 to 2.3. But for newer Rubies, I switched to at-the-time-new Discourse 1.8… which had slower HTTP processing, at least for my test. That’s fine. It’s a benchmark, not optimizing a use case for a real business. But it’s important to check how much slower, or we can’t compare newer Rubies to older ones. Luckily, Ruby 2.3.4 will run both Discourse 1.5 and 1.8, so we can compare the two.

One thing I have learned repeatedly: running the same test on two different pieces of hardware, even very similar ones (e.g. two different m4.2xlarge dedicated EC2 instances) will give noticeably different results. I’m often checking 10%, 5% or 1% speed differences. I can’t save old results and check against new results on a new instance. Different EC2 instances frequently vary by 1% or more between them, even freshly spun-up. So instead I grab a new instance and re-run the results with the new variables thrown in.

For example, this time I re-ran all the Discourse 1.8 results, everything from Ruby 2.3.4 up to 2.6.0, on a new instance. I also checked a few intermediate Ruby versions, not just the highest current micro version for each minor version - it’s not guaranteed that speed won’t change across a minor version (e.g. Ruby 2.3.X or Ruby 2.5.X) even though that’s usually basically true.

That also let me unify a lot of little individual blog posts that are hard to understand as a group (for me too, not just for you!) It’s always better to run everything all at once, to make sure everything is compared side-by-side. Multiple results over months or years have too many small things that can change - OS and software versions, Ruby commits and patches, network conditions and hardware available…

So: this was one huge run of all the recent Ruby versions on the same disk image, OS, hardware and so on. Each Ruby version is different from the others, of course.

Newer Graphs

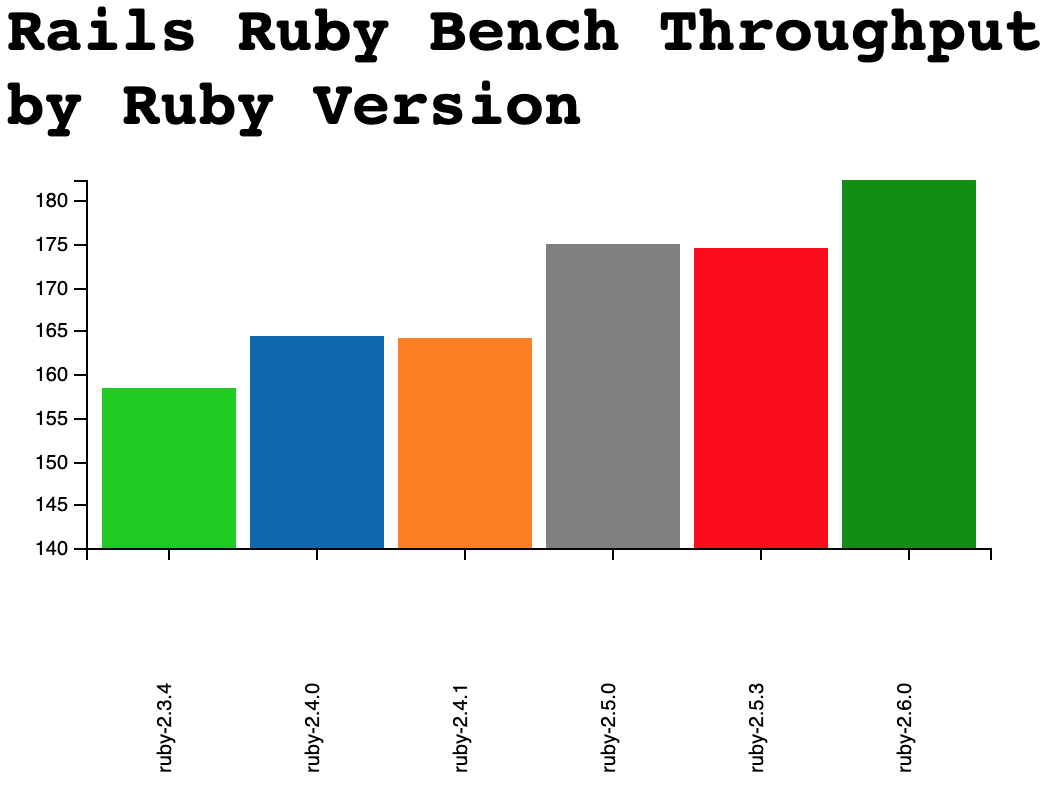

Let’s look at that newer data and see what there is to see about it:

Yeah, it’s in a different color scheme. Sorry.

Up and to the right, that’s nice. Here’s the same data in table form:

| Ruby Version | Throughput (reqs/sec) | Variance in Throughput | Speed vs 2.3.4 |
|---|---|---|---|
| 2.3.4 | 158.3 | 0.6 | 100.0% |
| 2.4.0 | 164.3 | 1.1 | 103.8% |
| 2.4.1 | 164.1 | 1.5 | 103.7% |
| 2.5.0 | 175.1 | 0.8 | 110.6% |
| 2.5.3 | 174.4 | 1.4 | 110.2% |
| 2.6.0 | 182.3 | 0.8 | 115.2% |

You can see that the baseline throughput for 2.3.4 is lower - it’s dropped from 190.3 reqs/sec to 158.3, which is roughly a 17% drop in speed, solely due to Discourse version. I’m assuming the same ratio between Discourse 1.5 and 1.8 holds for every Ruby version, since we can’t directly run new Rubies on 1.5 or old Rubies on 1.8 without patching the code pretty extensively.

You can also see tiny drops in speed from 2.4.0 to 2.4.1 and 2.5.0 to 2.5.3 - they’re well within the margin of error, given the variance you see there. It’s nice to see that they’re so close, given how often I assume that every micro version within a minor version is about the same speed!

I’m seeing a surprising speedup between Ruby 2.5 and 2.6 - I didn’t find a significant speedup when I measured before, and here it’s around 5%. But I’ve run this benchmark more than once and seen the result. I’m not sure what changed - I’m using the same Git tag for 2.6 that I have been[1]. So: not sure what’s different, but 2.6 is showing up as distinguishably faster in these tests - you can check the variances above to roughly estimate statistical significance (and/or email me or check the repo for raw data.)
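For a rough sense of which differences stand out from the noise, one option is to express each difference in units of the per-batch standard deviation (Cohen's d). This is a sketch, not a formal significance test, and it assumes the "Variance in Throughput" column is the sample variance of per-batch throughput, which the table doesn't state explicitly. The names here are mine.

```ruby
# Mean throughput and variance per Ruby version, from the Discourse 1.8 table.
# Assumption: "variance" is the sample variance of per-batch throughput.
Result = Struct.new(:mean, :variance)

DISCOURSE_18_RESULTS = {
  "2.3.4" => Result.new(158.3, 0.6),
  "2.4.0" => Result.new(164.3, 1.1),
  "2.4.1" => Result.new(164.1, 1.5),
  "2.5.0" => Result.new(175.1, 0.8),
  "2.5.3" => Result.new(174.4, 1.4),
  "2.6.0" => Result.new(182.3, 0.8)
}.freeze

# Difference between two runs in units of pooled per-batch standard deviation
# (Cohen's d for two equal-sized samples).
def effect_size(from, to)
  pooled_std_dev = Math.sqrt((from.variance + to.variance) / 2.0)
  (to.mean - from.mean) / pooled_std_dev
end

[["2.4.0", "2.4.1"], ["2.5.0", "2.5.3"], ["2.5.3", "2.6.0"]].each do |a, b|
  d = effect_size(DISCOURSE_18_RESULTS.fetch(a), DISCOURSE_18_RESULTS.fetch(b))
  puts format("%s -> %s: %+.2f standard deviations", a, b, d)
end
```

Under that assumption, the two micro-version drops come out at less than one standard deviation, while the jump to 2.6.0 is several standard deviations.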

If you’d like an easier-to-read graph, I have a version where I chopped the Y axis higher up, not at zero - it would be misleading for me to show that one first, but it’s better for eyeballing the differences:

Note that the Y axis starts at 140 - this is to check details, NOT get a reasonable overview.

Conclusions

If we assume we get a 49% speedup from Ruby 2.0.0 to 2.3.4 (see the Discourse 1.5 graph) and then multiply the speedups (they don’t directly add and subtract), here’s what I’d say for “how fast is RRB for each Ruby?” based on most recent results:

| Ruby Version | Speed vs 2.0.0 |
|---|---|
| 2.0.0 | 100% |
| 2.1.10 | 132% |
| 2.2.7 | 147% |
| 2.3.4 | 149% |
| 2.4.0 | 155% |
| 2.4.1 | 155% |
| 2.5.0 | 165% |
| 2.5.3 | 164% |
| 2.6.0 | 172% |
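Here's a small Ruby sketch of that multiplication, in case you want to reproduce the newer rows. It chains the Discourse 1.5 speedup for 2.3.4 with each version's Discourse 1.8 throughput relative to 2.3.4. It relies on the assumption stated earlier that the two Discourse versions scale the same way across Rubies, and the names are mine.

```ruby
# Speedup from Ruby 2.0.0 to 2.3.4, measured on Discourse 1.5.
SPEEDUP_2_0_0_TO_2_3_4 = 190.3 / 127.6

# Throughputs (reqs/sec) measured against Discourse 1.8.
DISCOURSE_18_THROUGHPUT = {
  "2.3.4" => 158.3,
  "2.4.0" => 164.3,
  "2.4.1" => 164.1,
  "2.5.0" => 175.1,
  "2.5.3" => 174.4,
  "2.6.0" => 182.3
}.freeze

# Multiply the older speedup by each version's speed relative to the bridge
# version (the one Ruby measured on both Discourse versions).
def chained_speed_vs_2_0_0(throughputs, bridge_version, bridge_speedup)
  bridge = throughputs.fetch(bridge_version)
  throughputs.transform_values { |t| (100.0 * bridge_speedup * t / bridge).round }
end

chained_speed_vs_2_0_0(DISCOURSE_18_THROUGHPUT, "2.3.4", SPEEDUP_2_0_0_TO_2_3_4).each do |version, pct|
  puts "#{version}: #{pct}%"
end
```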

For 2.6.1 and 2.6.2, I don’t see any patches that would cause it to be different from 2.6.0. That’s what I’ve seen in early testing as well. I think this is about how fast 2.6.X is going to stay. There are some interesting-looking memory patches for 2.7, but it’s too early to measure the specifics yet…

You’re likely also noticing diminishing returns here - 2.1 had a 32% speed gain, while I’m acting amazed at 2.6.0 getting an extra 6% (after multiplying - 6% relative to 2.0 is the same as 5% relative to 2.3.4 - performance math is a bit funny.) I don’t think we’re going to see a raw, non-JITted 10% boost on both of 2.7 and 2.8. And 10% twice would still only get us to around 208% for Ruby 2.8, even with funny performance math.
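The "10% twice" projection is the same kind of compounding. A tiny Ruby sketch, with a hypothetical helper name and the 10% figure taken purely as the what-if from the paragraph above:

```ruby
# Projected speed (as a percentage of Ruby 2.0.0) after a number of releases
# that each add the same relative gain.
def projected_speed(current_pct, gain_per_release, releases)
  current_pct * (1 + gain_per_release)**releases
end

# Start from 172% for 2.6.0 and apply a 10% gain for each of two releases.
puts projected_speed(172, 0.10, 2).round
```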

Overall, JIT is our big hope for achieving a 300% in the speed column in time for Ruby 3.0. And JIT hasn’t paid off this year for RRB, though we have high hopes for next year. There are also some special-case speedups like Guilds, but those will only help in certain cases - and RRB doesn’t look like one of those cases.

[1] There’s a small chance that I was unlucky when I ran this a couple of times with the release 2.6 and it just looked like it was the same speed as the prerelease. Or maybe the way I did this in lots of small chunks (2.5.0 vs later 2.5 versus 2.6 preview vs later 2.6) hid a noticeable speedup because I was measuring too many small pieces. Or maybe I was significantly unlucky both times I ran this benchmark more recently. It seems unlikely that the request-speed graphs I saw for 2.6 result in a 5% faster throughput - not least because I checked throughputs before, too, even though I graphed request speeds in those blog posts.